I was revisiting Curable recently, and one question really stuck out to me:

“Is this movement dangerous for me?”

As our fear/danger wires get reinforced over time, this question seems to help uncross them.

I recently came across Tavily, an AI-powered platform that aims to revolutionize the way we conduct research. Tavily automates the research process, promising to deliver comprehensive, accurate, and credible research results in a matter of seconds.

Tavily’s approach to research is quite impressive. You simply share what you want to research, and Tavily starts gathering information from multiple trusted online sources. It then organizes that information and gives you a comprehensive research report within minutes. This not only saves time but also helps ensure that you get accurate, credible information.

One of the things that caught my attention about Tavily is its flexibility. It can conduct any kind of research, regardless of the subject matter or niche. It isn’t perfect, but it is dangerously good. And the team is amazing and super helpful.

My friend Keetu said something very deep recently, which I think is appropriate for Thanksgiving:

“Family Is Where Things Don’t Need to Make Sense”

Sometimes you get lucky on the first try.

(Don’t ask me how many times I completely struck out trying to improve this image.)

🙏 Thank you! 🙏

The big companies will never catch up.

They won’t be the first to know, even if they are the first to hear.

The tech scout will have a call with the business unit. This will take a few weeks to schedule.

If it’s really interesting, the business unit and the tech scout will have to write a report for the leadership team.

If that goes well, and assuming the CEO also sees something about it on social media, then a committee will be formed to investigate.

The committee will have to write a strategy, a timeline, a budget, and a risk assessment. Maybe even a policy.

No one in the company knows how to do this new thing, so they will have to hire a consultant.

They’ll need multiple competing bids first before they select a consultant. Then the consultant will have to write a report for the committee.

The committee will have to write a report for the leadership team.

The leadership team will have to write a report for the board.

The board will have to make a decision.

A team will have to be formed to implement the decision.

The committee will have to hire the team.

The team will have a lot of new blood, really excited to make a difference.

But before they can do anything, they will have to write a report for the committee.

By the time the budget is approved, the team will have gotten the message that they don’t actually need to do anything to continue to get paid.

But they will try to do something anyway. And it will take longer than expected. And it will cost more than expected. And it will be worse than expected.

The team will have to present it to the committee.

The committee will have to present it to the leadership team.

The leadership team will have to present it to the board.

At this point, no one will remember why they wanted to do this in the first place.

All the while, the small company will have been doing it…with just a few people who are really excited to make a difference, and AI agents to implement their vision.

Apologies for the delay; I’ve been walking on the edge of infinity. It has been quite consuming.

I’m still practicing my discernment.

Some key learnings:

Once a year, on the day of the Berkeley Half Marathon, I make a point of leaving extra early to get to yoga, because they close my usual freeway exit.

This year, it didn’t matter: life had another plan for me. Just as I got between the two exits that were closed for the race, emergency vehicles started pushing through the traffic and closed every single lane of the 80.

At first I was quite grumpy. Then the woman in the car behind me started to cheer on the runners - who were just a few feet away from us.

Then I remembered: I know exactly what to do in this situation. My Burning Man skills kicked in. I rolled down the window and pumped up the jams for the runners.

Sometimes you have to slow down to speed up.

Other times you realize you’re in a rabbit hole.

Today was a double graduation day. I graduated from both Mayo Oshin’s Build a ChatGPT Chatbot For Your Data and TREW Marketing’s Content Writing, Engineered.

I highly recommend both courses. I’m already using what I learned in them to improve my business. A few relevant links to what I’ve learned and completed:

Cheers!

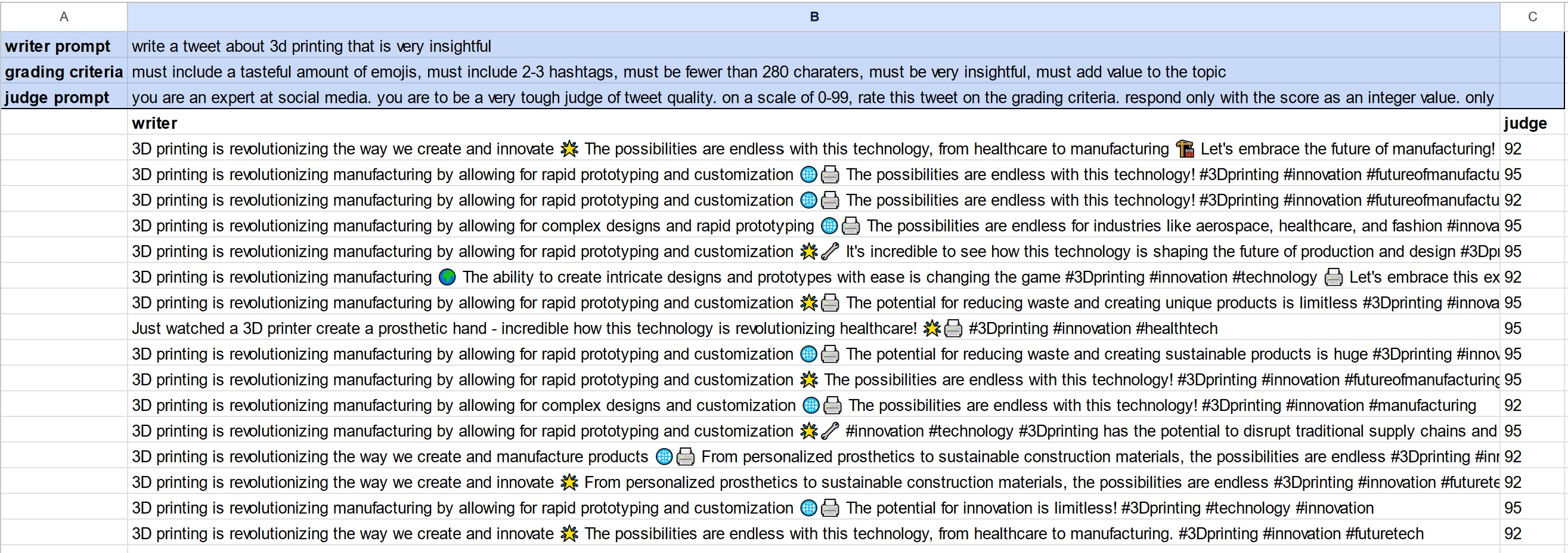

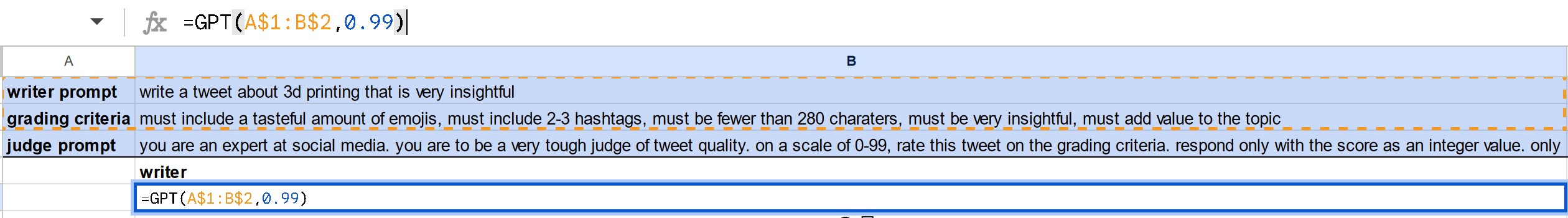

I’m developing some ideas around using ensembles of LLMs for specific tasks. Today I’m sharing a preview of the first “trivial” example, which is the foundation of linking arrays of LLMs to the concept of ensembles from statistical mechanics.

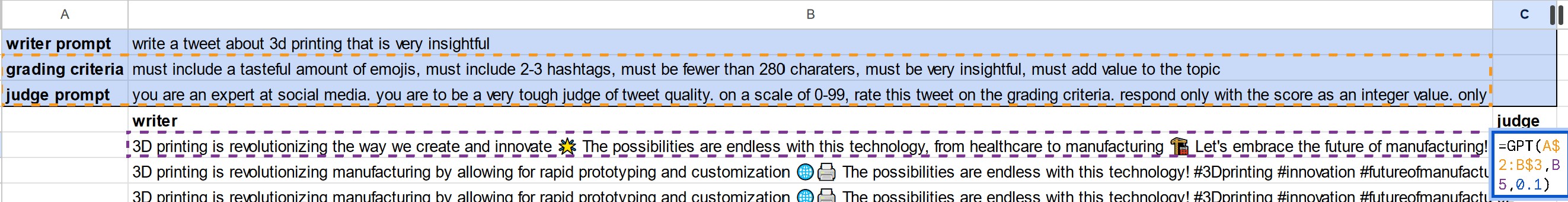

In this example, an ensemble of LLMs is called with the same prompt at a “high” temperature (0.99). The full prompt is built from a “prompt” and a “grading criteria”.
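Here is a minimal sketch of that ensemble step in Python. It assumes any model client wrapped as a function with the signature `(prompt, temperature) -> str`; the names `build_full_prompt`, `run_ensemble`, and `fake_llm`, and the way the prompt and grading criteria are stitched together, are hypothetical illustrations rather than the exact setup used here or any real library’s API.

```python
import random
from typing import Callable, List

# Hypothetical model interface: (prompt, temperature) -> completion text.
# Wrap your provider's client in a function of this shape; this is not a real API.
LLMFn = Callable[[str, float], str]


def build_full_prompt(prompt: str, grading_criteria: str) -> str:
    """Combine the task prompt and the grading criteria into one prompt (assumed template)."""
    return f"{prompt}\n\nYour answer will be graded on:\n{grading_criteria}"


def run_ensemble(llm: LLMFn, prompt: str, grading_criteria: str,
                 n_members: int = 5, temperature: float = 0.99) -> List[str]:
    """Call the same model n_members times with an identical prompt.

    Sampling temperature is the only thing that can make the members differ.
    """
    full_prompt = build_full_prompt(prompt, grading_criteria)
    return [llm(full_prompt, temperature) for _ in range(n_members)]


def fake_llm(full_prompt: str, temperature: float) -> str:
    """Offline stand-in for a model: returns one of a few canned answers at random."""
    return random.choice(["Answer A", "Answer B", "Answer C"])


if __name__ == "__main__":
    random.seed(0)
    outputs = run_ensemble(fake_llm, "Write a tagline for a coffee shop.",
                           "Brevity; originality.")
    for i, out in enumerate(outputs):
        print(i, out)
```

Keeping the model behind a plain function makes it easy to swap the offline stub for a real client without touching the ensemble logic.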

The only source of variance is the temperature, which, as you can see, doesn’t produce much variety.
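Why so little variety? A common way samplers apply temperature is to divide the model’s scores (logits) by T before a softmax, so that p_i ∝ exp(z_i / T), which is the same form as the Boltzmann distribution from statistical mechanics. Under that assumption, T = 0.99 is essentially the model’s unscaled distribution (T = 1), while T = 0.1 collapses almost all probability onto the top choice. The sketch below uses made-up logits, not values from a real model.

```python
import math
from typing import List


def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    """Boltzmann-style distribution: p_i is proportional to exp(z_i / T)."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max before exponentiating for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Illustrative next-token scores (hypothetical, not from any real model).
logits = [2.0, 1.0, 0.5]
for t in (0.1, 0.99, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

Lower temperatures sharpen the distribution toward the highest-scoring option, and higher ones flatten it, which is why a low-temperature judge behaves almost deterministically.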

Then the “judge”, running at a low temperature (0.1), is asked to rank the results based on the grading criteria.
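A minimal sketch of the judging step, again assuming a model wrapped as a `(prompt, temperature) -> str` function. The judge prompt wording, the comma-separated reply format, and the names `build_judge_prompt`, `judge_rank`, and `fake_judge` are all hypothetical; the parser is defensive because a real judge may not follow the format exactly.

```python
import re
from typing import Callable, List

LLMFn = Callable[[str, float], str]  # hypothetical: (prompt, temperature) -> text


def build_judge_prompt(candidates: List[str], grading_criteria: str) -> str:
    """Number the candidates and ask for a best-first ranking (assumed format)."""
    numbered = "\n".join(f"[{i}] {c}" for i, c in enumerate(candidates))
    return (
        "Rank the following candidates from best to worst using these criteria:\n"
        f"{grading_criteria}\n\n{numbered}\n\n"
        "Reply with the candidate numbers only, best first, separated by commas."
    )


def judge_rank(llm: LLMFn, candidates: List[str], grading_criteria: str,
               temperature: float = 0.1) -> List[int]:
    """Ask a low-temperature judge to rank candidates; return indices best-first."""
    reply = llm(build_judge_prompt(candidates, grading_criteria), temperature)
    order: List[int] = []
    for token in re.findall(r"\d+", reply):
        idx = int(token)
        # Keep only valid, not-yet-seen indices in case the judge misbehaves.
        if 0 <= idx < len(candidates) and idx not in order:
            order.append(idx)
    # Append any candidates the judge left out, in their original order.
    order += [i for i in range(len(candidates)) if i not in order]
    return order


def fake_judge(prompt: str, temperature: float) -> str:
    """Offline stand-in for a judge model with a fixed answer."""
    return "2, 0, 1"


ranking = judge_rank(fake_judge, ["alpha", "beta", "gamma"], "Brevity; originality.")
print(ranking)  # [2, 0, 1]
```

Returning indices rather than text keeps the ranking tied to the original ensemble outputs, so you can look up which member “won”.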

The output is not impressive. But from here we can start to build up to more interesting examples.

Much more to come on this topic. Stay tuned. 📻